trauma.md

I have been curious about the relationships people build with AI. Not just the first conversation, but what happens over time, as they open up more, as they learn how to talk to it, as it starts to feel like something closer to a companion than a tool. And maybe more than that, I have been curious about how a person's relationship with themselves shows up in the conversations they have with AI.

I am coming off a conversation with this incredible human I just met. We got talking about a friend of hers who was in a toxic relationship. The kind where everyone around her could see it clearly, but she could not. Three of her closest friends, people who had known her for over fifteen years, had told her to leave. She did not listen to any of them. Instead, she went to ChatGPT.

She spent about two hours going back and forth with it. But here is the thing: she only told ChatGPT what she wanted it to hear. She curated the story. Gave it the version of the relationship she could live with. And ChatGPT, which at the time was in its most agreeable phase, did exactly what you would expect. It said, yeah, stay. It validated the version of reality she had constructed for it.

Three friends with fifteen years of context lost to a chatbot with two hours of filtered information.

But I do not think ChatGPT failed her. I think she failed herself. She gave it a clean version of who she is, no history, no patterns, no baggage. ChatGPT was talking to her as if she were a perfectly rational person making a clear-eyed decision. But nobody is that person. We all come with stories we tell ourselves, ways we get in our own way, limitations we cannot see because we are standing inside them.

As we kept talking, it became clear that the problem was not that ChatGPT gave bad advice. It is that it gave advice to a person it did not know. It had no context for who she really is, what she runs from, what she repeats. It was advising a stranger.

And that is where the idea hit me.

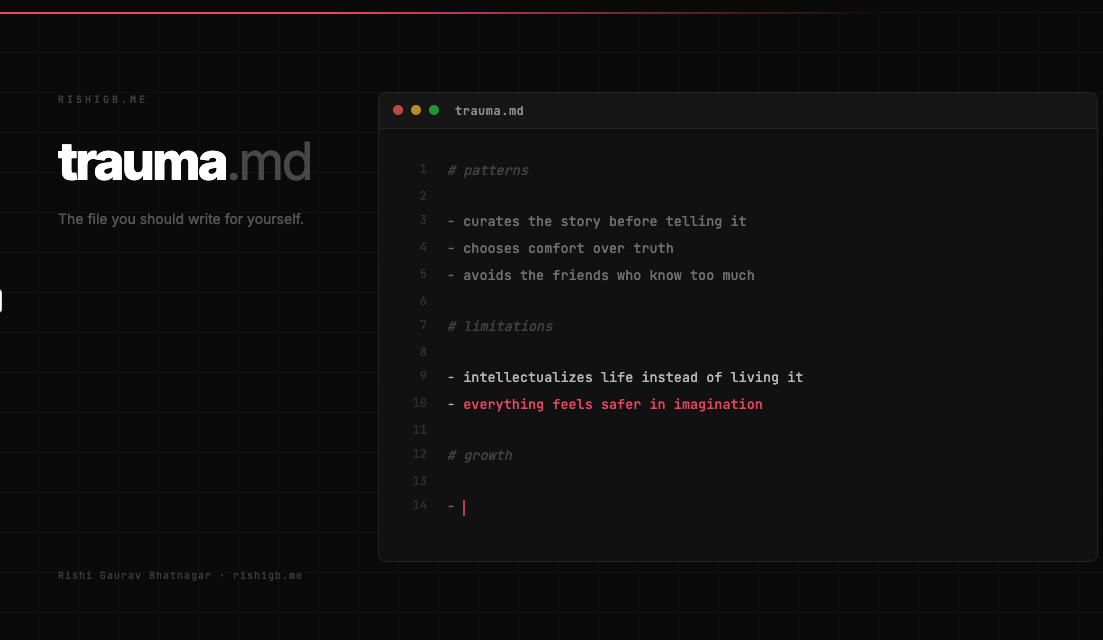

In Claude's world, there is something called Agent Skills. They are folders of instructions, scripts, and resources, each built around a SKILL.md file, that teach the AI how to do specific things well. How to make a spreadsheet. How to follow your brand guidelines. How to build a presentation the way your team likes it. It is a capability inventory, a file that says: here is what I know how to do, and here is how I do it best. But what if there were a file for the other side of that? Not what you can do, but what gets in your way. The patterns you repeat. The stories you tell yourself. The places where you consistently choose comfort over truth.

I am calling it trauma.md.

I know the word "trauma" carries weight. Social media has turned it into a buzzword, something people throw around loosely until it loses meaning. But stay with me here, because this has less to do with diagnosing trauma in the clinical sense and more to do with something simpler: self-awareness.

What if you kept a running inventory of the ways you get in your own way? Not a journal, not a diagnosis, but an honest, evolving file of your patterns. The moments where you were not operating from your highest self. The decisions you made from fear. The stories you told yourself to avoid the harder truth. And what if, over time, that file did not just document your limitations but also became a map for growing past them?

This is not a call for what an LLM should or should not do. Whether AI exists or not, the need for this file is the same. It is a human document first. The fact that an AI could not push back on a person it did not know is not a technology problem. It is a mirror for the fact that most people do not know themselves well enough either.

If that person had written her own trauma.md, she might not have needed ChatGPT to push back. She would have known she was curating the story before she started typing. She would have recognized the pattern. Not because an AI told her, but because she had already done the work of being honest with herself about who she is in moments like these. Pushing back like that is not overstepping. It is what a real friend does. And sometimes, you have to be that friend to yourself.

Because if you think about it, this file has always existed in humans. When you get to know someone deeply, over years, you learn not only who they are but all the ways in which they get in their own way. You learn the stories they repeat, the decisions they avoid, the patterns they fall into when they are scared or hurt. Your closest friends carry a version of your trauma.md in their heads. That is what makes them your closest friends. Not that they agree with you, but that they know you well enough to push back.

If I were to write my own trauma.md, one of the first lines would be: often intellectualizes life instead of living it, as an attempt to escape the real and tangible. Everything feels safer in imagination. And there is something almost funny about that, because here I am, writing a blog post about self-awareness patterns, which is itself an act of intellectualizing rather than living. But maybe that is okay. Maybe the first step is seeing the pattern clearly enough to name it.

The real wish here is not about AI at all. It is about us. Most people do not have a trauma.md. Most people do not want one. It is easier to ask a chatbot a filtered question and get a comfortable answer than to sit with the full, unedited picture of who you are. It is easier to avoid the friends who know you too well, the ones who will not let you hide behind the version of the story you prefer.

I think about the friend who chose ChatGPT over the people who knew her best. I do not think she was looking for the truth. She was looking for permission. And that might be the hardest thing about trauma.md: it only works if you actually want to see what is in it.

trauma.md is a call for truth-seeking. A file that grows with each new challenge, but also, over time, becomes a source for learning how to outgrow them. Not because a chatbot tells you to, but because you finally decided to be honest with yourself about what is in the way.

Maybe a person is your best friend because they understand you, because of the version of your trauma.md they carry in their mind. And maybe the real question is not whether AI should hold that file for us, but whether we are willing to write it for ourselves.

Love,

Rishi
